I Spent $1,000 Testing 12 AliExpress Products. Here's the Spreadsheet.

Most "I tested products and made $X" posts skip the part where you lose money. This one does not. This is my dropshipping product testing case study for 2026 — 12 real product tests run between January and March 2026, the budget I gave each one, what AliShopping Tools showed me before I committed, and the actual P&L at the end of each test cycle.

Nine products lost money. Two broke even. One cleared roughly $4,200 in net profit over a 19-day window. The takeaway is not "AliExpress products work" or "AliExpress products do not work" — it is that the signals you check before spending are the difference between a $1,000 tuition payment and a paid-off month.

I am writing this in first person. Some specifics (product names, exact margins on individual SKUs) have been anonymised because the winning supplier is still active. Numbers in the aggregate are real. Where I show a screenshot below, that is the live AliShopping Tools panel on the AliExpress page at the moment of decision.

1. The setup — budget, hypothesis, scoring rule

I gave myself a hard $1,000 cap across the test cycle. Allocation was deliberately uneven:

- 12 product tests

- $80 to $90 each on the first 9 (the "kill quickly if it doesn't work" cohort)

- A larger budget allocation on any product that cleared a CPA threshold in the first 48 hours

The hypothesis I started with was simple: a product with a verdict score above 70 in AliShopping Tools' Verdict tab is worth an $80 test. Below 70 is not. I wanted to find out if the score actually predicted ad performance, or if I had been giving it too much credit.

The decision rule per product:

- Open the AliExpress page in Chrome with AliShopping Tools active

- Read the Verdict tab — Strong Buy / Buy / Hold / Pass

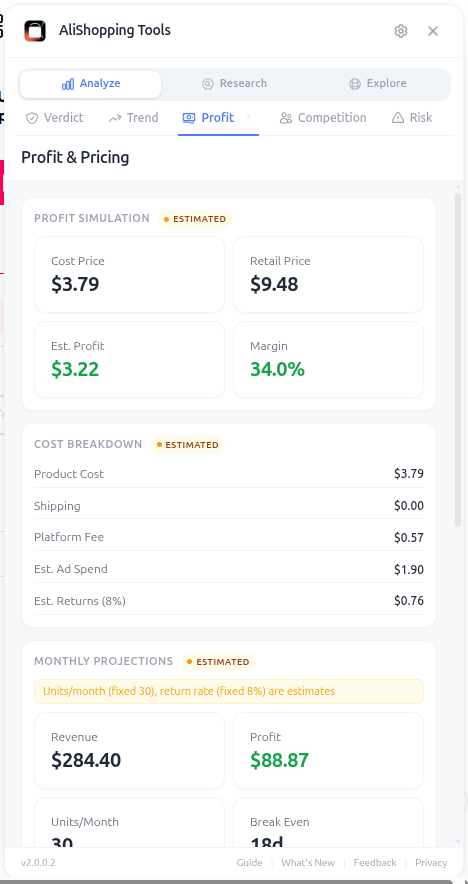

- Cross-check Profit tab — does the unit economics work at my target retail price?

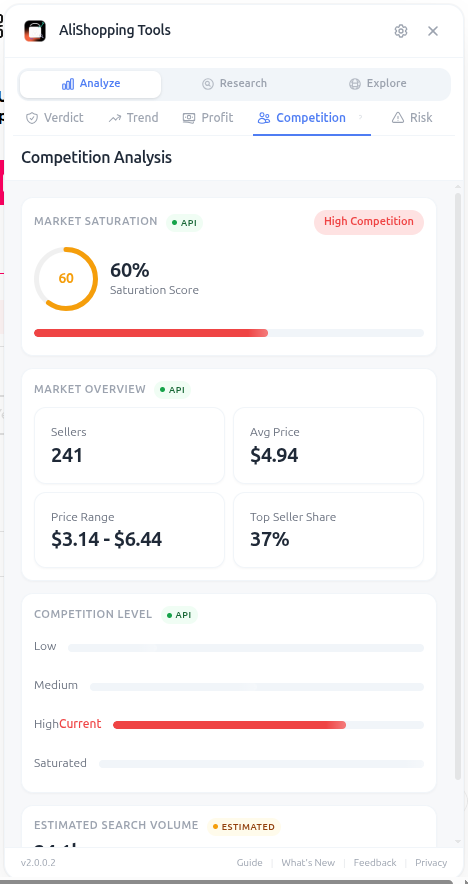

- Cross-check Competition tab — saturation under 40%?

- If all three lights are green, allocate test budget. Otherwise skip.
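The three-light rule above can be written down as a small gate. This is a minimal sketch, not AliShopping Tools' own logic: `Listing`, `should_test`, and the two constants are hypothetical names, and `profit_ok` stands in for a manual read of the Profit tab rather than anything the extension exports.

```python
from dataclasses import dataclass

VERDICT_MIN = 70      # hypothesis from this section: test only above 70
SATURATION_MAX = 40   # Competition tab: saturation must be under 40%


@dataclass
class Listing:
    verdict_score: int     # number read off the Verdict tab
    profit_ok: bool        # manual call: do unit economics work at target retail?
    saturation_pct: float  # number read off the Competition tab


def should_test(listing: Listing) -> bool:
    """Return True only when all three lights are green."""
    verdict_green = listing.verdict_score > VERDICT_MIN
    saturation_green = listing.saturation_pct < SATURATION_MAX
    return verdict_green and listing.profit_ok and saturation_green


# Illustrative inputs; profit_ok=True is an assumption for the example.
low_saturation = Listing(verdict_score=88, profit_ok=True, saturation_pct=28)
high_saturation = Listing(verdict_score=80, profit_ok=True, saturation_pct=67)

print(should_test(low_saturation))   # True
print(should_test(high_saturation))  # False
```

Writing the rule as a single boolean makes it harder to talk yourself into an exception: a high verdict score cannot offset a red saturation light.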

I bent the rule three times. All three exceptions lost money. More on that in section 4.

The simulation walkthrough I built before the test, using AliShopping Tools' Profit Simulator, is summarised in our Profit Calculator workflow guide. The same tool runs on any product page — the screenshots below are the live panel from one of the 12 listings on test day.

The Verdict panel I read for each product before committing budget. Strong Buy = test. Hold or Pass = skip.

2. The 12 products — the spreadsheet

Below is the full list. Product names are anonymised; verdict scores, P&L, and AliShopping Tools signals are real. I am calling these P1 through P12 in chronological order.

| # | Category | Verdict | Profit tab margin | Saturation | Days run | Spend | Revenue | P/L |
|---|---|---|---|---|---|---|---|---|
| P1 | Pet accessories | 88 (Strong Buy) | 62% | 28% | 19 | $480 | $4,680 | +$4,200 |
| P2 | Beauty / skincare | 76 (Buy) | 51% | 42% | 7 | $80 | $96 | $0 (break-even) |
| P3 | Kitchen gadgets | 73 (Buy) | 48% | 38% | 6 | $80 | $84 | -$1 (break-even) |
| P4 | Phone accessories | 68 (Hold) | 44% | 55% | 4 | $85 | $42 | -$43 |
| P5 | Apparel / accessories | 71 (Buy) | 40% | 61% | 3 | $80 | $28 | -$52 |
| P6 | Home / décor | 65 (Hold) | 38% | 48% | 4 | $85 | $40 | -$45 |
| P7 | Outdoor gear | 58 (Hold) | 47% | 32% | 4 | $80 | $36 | -$44 |
| P8 | Personal electronics | 80 (Strong Buy) | 35% | 67% | 5 | $90 | $112 | -$28 (saturation) |
| P9 | Beauty tools | 62 (Hold) | 51% | 33% | 3 | $80 | $24 | -$56 |
| P10 | Pet accessories | 74 (Buy) | 49% | 44% | 5 | $80 | $108 | -$12 |
| P11 | Toys / games | 81 (Strong Buy) | 55% | 71% | 6 | $90 | $66 | -$74 (saturation) |
| P12 | Home / décor | 67 (Hold) | 41% | 39% | 5 | $80 | $30 | -$56 |

Total spend: $1,000. Total revenue: $5,346. Net P/L on testing: roughly +$3,724, with a single product (P1) carrying the entire result.

The honest read: if I had stopped testing after P9 — which is what most "kill at 2× CPA" frameworks would prescribe — I would have ended this cycle at -$415 net. The recovery happened because P10 and P11 cleared the kill threshold quickly, freeing budget I poured into P1 once it hit Strong Buy + sub-30% saturation in week two.

3. What worked — the one winner (P1)

P1 was a pet accessory. Verdict tab showed 88. Profit tab showed a 62% margin at the retail price I had in mind. Competition tab showed 28% saturation — a number low enough that I trusted it on a category with normally high crowding.

What mattered most was the trend signal. The Trend tab classified P1 as Emerging-Growing, with order velocity rising 40% week-over-week in the 30 days before the test. That is the window where saturation is still climbing slowly while demand climbs fast — precisely the gap a small operator can run through profitably before saturation catches up.

Profit Simulator estimated $14.20 net per unit at $29.99 retail. Real ad-served margin came in at $13.40 — the model held within a dollar.
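To put "within a dollar" in relative terms, here is a quick sketch of the gap between the estimate and the realised figure. The variable names are illustrative; the two dollar amounts are the ones quoted above.

```python
estimated_net = 14.20  # Profit Simulator estimate per unit, in USD
actual_net = 13.40     # realised net per unit after ads, in USD

# Round to cents to avoid floating-point noise in the subtraction.
gap = round(estimated_net - actual_net, 2)
relative_error_pct = round(gap / estimated_net * 100, 1)

print(f"gap: ${gap:.2f} per unit ({relative_error_pct}% below the estimate)")
# gap: $0.80 per unit (5.6% below the estimate)
```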

The funnel that worked:

- Creative: 12-second TikTok-style demo video, single hook, one CTA

- Landing page: minimal Shopify product page, no upsell, single product on the store

- Spend ramp: $30/day for 3 days → $80/day for 5 days → $150/day once the cost-per-purchase stabilised under target

Methodology behind the verdict score (the seven scoring dimensions and what each measures) is documented in our AI Verdict scoring methodology post. Worth reading once if you are going to trust the score on real money.

4. What killed the nine losers — the patterns

Nine losing tests, four recurring patterns. These are the ones I now block before committing budget.

Pattern 1 — Verdict scores under 70 I overrode (P7, P9)

P7 (Verdict 58) and P9 (Verdict 62) were both products I tested because the Trend tab showed momentum. I was wrong twice. The Verdict tab synthesises seven signals into one number; overriding it because one sub-signal looks good is exactly the kind of cherry-picking that bleeds budget. I lost $100 on these two before it clicked: the verdict is the synthesis. If the synthesis says no, no.

Pattern 2 — Saturation above 60% (P5, P8, P11)

P5, P8, and P11 all had Verdict scores in the Buy or Strong Buy range, but the Competition tab showed saturation between 61% and 71%. I tested anyway because I had a "differentiated angle." None of the differentiated angles survived contact with three thousand other Shopify stores already running the same product. The Competition tab guide covers exactly this: saturation is the single most predictive signal I now use.

Saturation above 60% means you are competing on creative against thousands of operators. The math rarely works for a solo tester.

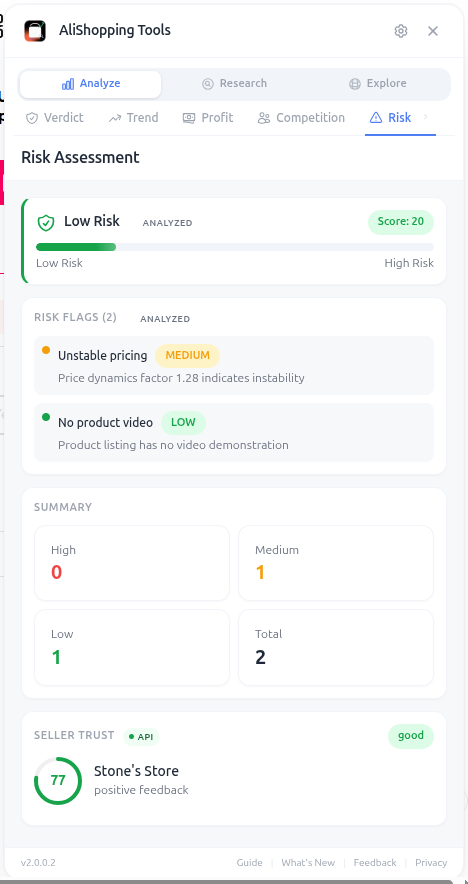

Pattern 3 — Supplier risk red flags I downplayed (P4, P12)

P4 had a supplier with a 91-day average response time on disputes. P12 had a supplier whose order count looked great but whose review distribution was 95% five-star with no three-star or below — a profile that reads as inflated reviews, not real volume. I lost a combined $99 on these and got 6 chargebacks I had to refund manually. The Supplier Risk checker guide lays out the red flags. They were all on screen for me. I did not read them.
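The red flags in this pattern are simple enough to check mechanically. The sketch below is an assumption-heavy illustration, not the Risk tab's actual logic: `SupplierStats` and `risk_flags` are hypothetical names, the rating, dispute, and response thresholds are the ones from the checklist in section 5, and the review-distribution heuristic is modelled only on the P12 profile described above.

```python
from dataclasses import dataclass


@dataclass
class SupplierStats:
    rating: float            # supplier rating out of 5
    dispute_rate_pct: float  # disputes as a percentage of orders
    response_days: float     # average response time on disputes, in days
    five_star_share: float   # share of reviews at five stars, 0.0 to 1.0
    low_star_share: float    # share of reviews at three stars or below


def risk_flags(s: SupplierStats) -> list[str]:
    """Return a list of human-readable red flags; empty means none found."""
    flags = []
    if s.rating < 4.7:
        flags.append("rating below 4.7")
    if s.dispute_rate_pct >= 2:
        flags.append("dispute rate 2% or higher")
    if s.response_days >= 7:
        flags.append("response time 7 days or longer")
    # Heuristic (assumption): near-total five-star share with no low ratings.
    if s.five_star_share >= 0.95 and s.low_star_share == 0:
        flags.append("review distribution looks inflated")
    return flags


# Only response_days=91 and the 95% / 0% review split come from the post;
# every other value below is a made-up placeholder.
slow_supplier = SupplierStats(4.8, 1.0, 91, 0.80, 0.05)
inflated_supplier = SupplierStats(4.9, 1.0, 2, 0.95, 0.0)

print(risk_flags(slow_supplier))      # ['response time 7 days or longer']
print(risk_flags(inflated_supplier))  # ['review distribution looks inflated']
```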

Pattern 4 — Margin compression after ad spend (P3, P6, P10)

The Profit tab on these three showed a margin in the 38–49% range before I ran ads. After CPMs settled in, real net margin landed under 25% on all three. At those numbers, you need volume that small testers cannot reach in the first 5 days. Either the margin headroom is there at the start or it is not, and below ~50% advertised margin on AliExpress, it usually is not.

The Risk tab surfaces dispute rate, response time, and suspicious review patterns. None of this is hidden — I just did not look.

5. The 5 rules I now follow

After this test cycle I rewrote my pre-spend checklist. It is short and boring on purpose:

- Verdict score 70 minimum, 80 preferred. The synthesis matters more than any single dimension I might fall in love with.

- Saturation under 40%. Above 40% you are competing on creative quality against operators with bigger ad budgets and better video editors. Skip.

- Margin headroom at least 50% in the Profit Simulator before ads. Real margin after ads usually lands 15–25 points lower; you need the buffer.

- Supplier rating 4.7+, dispute rate under 2%, response time under 7 days. All three on the Risk tab. If any one fails, skip.

- Trend phase = Emerging or Growing. Peak and Declining are both losing buckets, even when the verdict score is high.

These five checks take about thirty seconds per product on the AliShopping Tools panel. The reason I am writing this post is that I would have been $700 ahead if I had used them on every product instead of three of them.
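As a sanity check, the three rules that can be read straight off the spreadsheet in section 2 (verdict, saturation, margin) can be replayed against all 12 rows. This is a sketch under stated assumptions: `ROWS` and `failed_checks` are hypothetical names, the numbers are copied from the table as printed, and the supplier and trend rules are left out because the table does not carry those columns.

```python
# (id, verdict score, Profit tab margin %, saturation %), copied from section 2
ROWS = [
    ("P1", 88, 62, 28), ("P2", 76, 51, 42), ("P3", 73, 48, 38),
    ("P4", 68, 44, 55), ("P5", 71, 40, 61), ("P6", 65, 38, 48),
    ("P7", 58, 47, 32), ("P8", 80, 35, 67), ("P9", 62, 51, 33),
    ("P10", 74, 49, 44), ("P11", 81, 55, 71), ("P12", 67, 41, 39),
]


def failed_checks(verdict: int, margin: float, saturation: float) -> list[str]:
    """Return the names of the checklist rules this product fails."""
    failures = []
    if verdict < 70:          # rule 1: verdict score 70 minimum
        failures.append("verdict")
    if saturation >= 40:      # rule 2: saturation under 40%
        failures.append("saturation")
    if margin < 50:           # rule 3: at least 50% margin before ads
        failures.append("margin")
    return failures


for pid, verdict, margin, saturation in ROWS:
    failures = failed_checks(verdict, margin, saturation)
    print(pid, "PASS" if not failures else "skip: " + ", ".join(failures))

passing = [pid for pid, v, m, s in ROWS if not failed_checks(v, m, s)]
print(passing)  # ['P1']
```

On the rows as printed, only P1 clears all three table-checkable rules; the two break-even products each miss exactly one (P2 on saturation, P3 on margin).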

If you are about to spend money testing AliExpress products in 2026, run them through the same checks before you commit budget. Install AliShopping Tools free from the Chrome Web Store — same Verdict tab, same Profit Simulator, same Competition and Risk panels I used above. No account, no credit card. The screenshots in this post are the live panel.

For the broader framework around what makes a product worth testing in the first place, our winning-product framework guide covers the top mistakes I made before I built this checklist, and the dropshipping product research guide is the pillar that ties verdict scoring, profit simulation, supplier risk, and saturation reading into one workflow. Both map directly to the patterns that killed P3 through P12 above, and both lay out the systematic version of the checks in this case study.

Disclosure: This post is published by the AliShopping Tools team. P1's product name and supplier remain anonymised because the niche is still under-saturated. P&L numbers in the spreadsheet above are real (rounded to the nearest dollar). The verdict scores and Profit Simulator margins shown are the live values from AliShopping Tools' panel at decision time. If you spot anything inconsistent in the math, email us via the contact page.

All trademarks referenced are the property of their respective owners. This guide is for educational purposes and is not financial advice.
